About

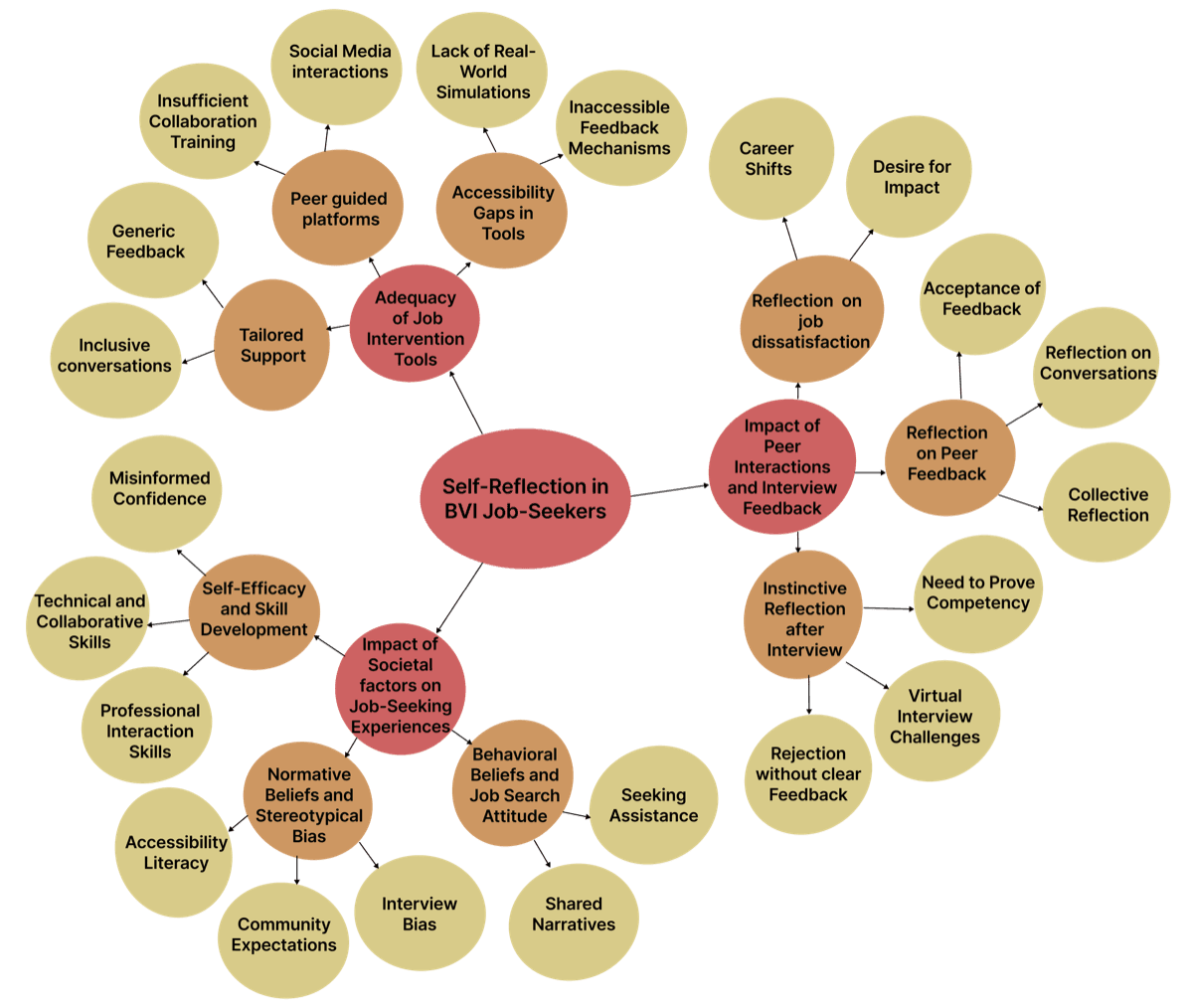

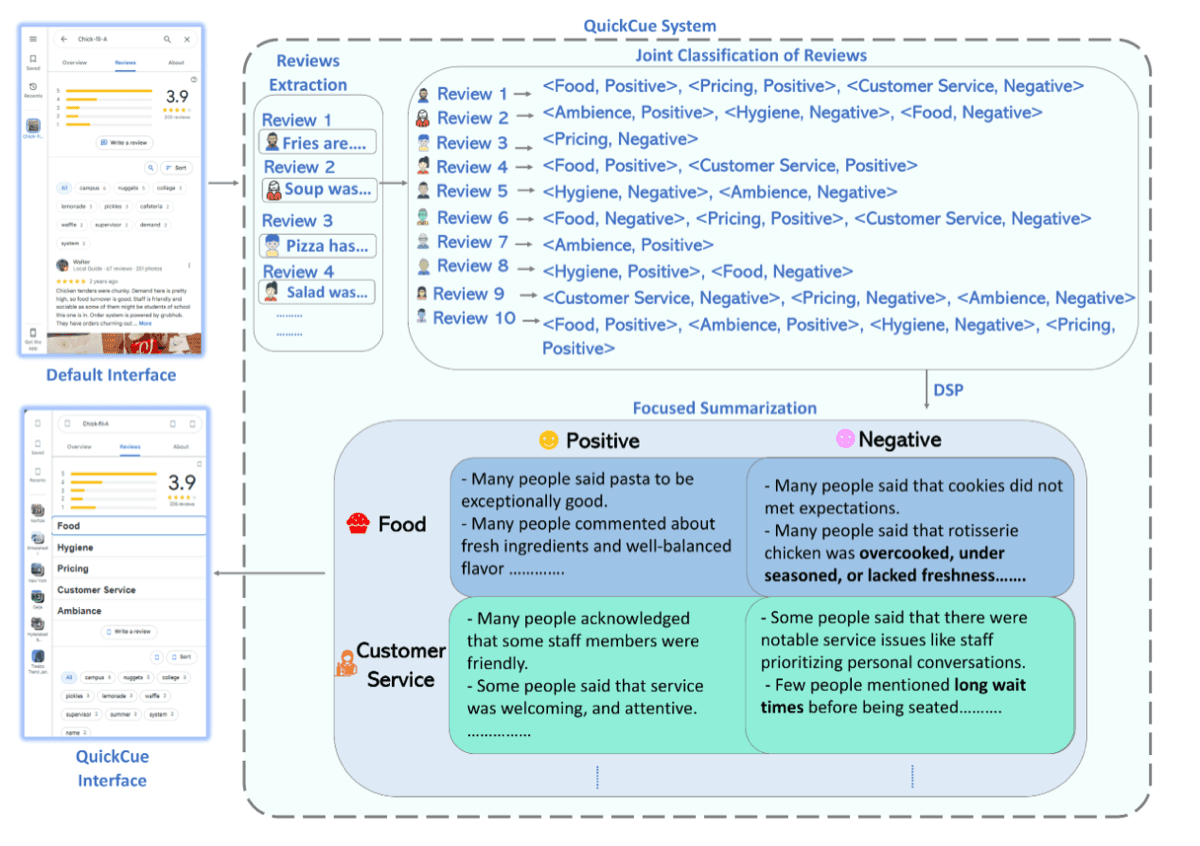

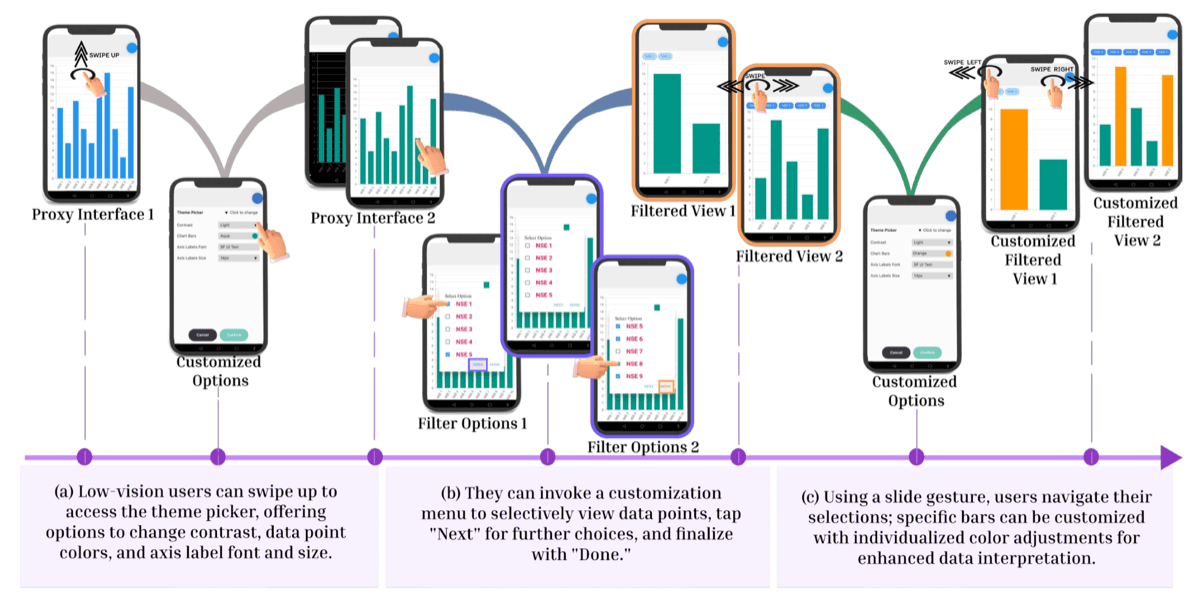

Akshay is a researcher and engineer based in Norfolk, Virginia, currently pursuing a Ph.D. at Old Dominion University in the Accessible Computing Lab, advised by Dr. Vikas Ashok. His interdisciplinary work spans Human-Centered AI, Accessibility, Usability, Eye Tracking, and Social Computing, with a focus on developing intelligent solutions that improve the usability and accessibility of digital technologies. He has conducted research across several domains, including data visualizations, e-commerce platforms, user-generated content (such as discussion forums and reviews), web archives, and social computing systems. His most recent research focuses on adapting technologies originally developed in affluent settings to support reliable information access for resource-constrained populations, and on designing eye-tracking-based solutions to enhance the usability of dynamic digital content for individuals with low vision. His work has been published in top-tier HCI venues such as CHI, CSCW, SIGCSE TS, IEEE VIS (TVCG), IJHCI, ASSETS, ICMI, and EICS (PACMHCI).

Akshay earned his bachelor's degree from Visvesvaraya Technological University (VTU). Before beginning his doctoral studies, he worked as a Research Assistant at the HandsOn Lab and as a Software Engineer at the London Stock Exchange Group (LSEG) and BetaNXT.

Akshay is actively seeking internship opportunities for 2026.